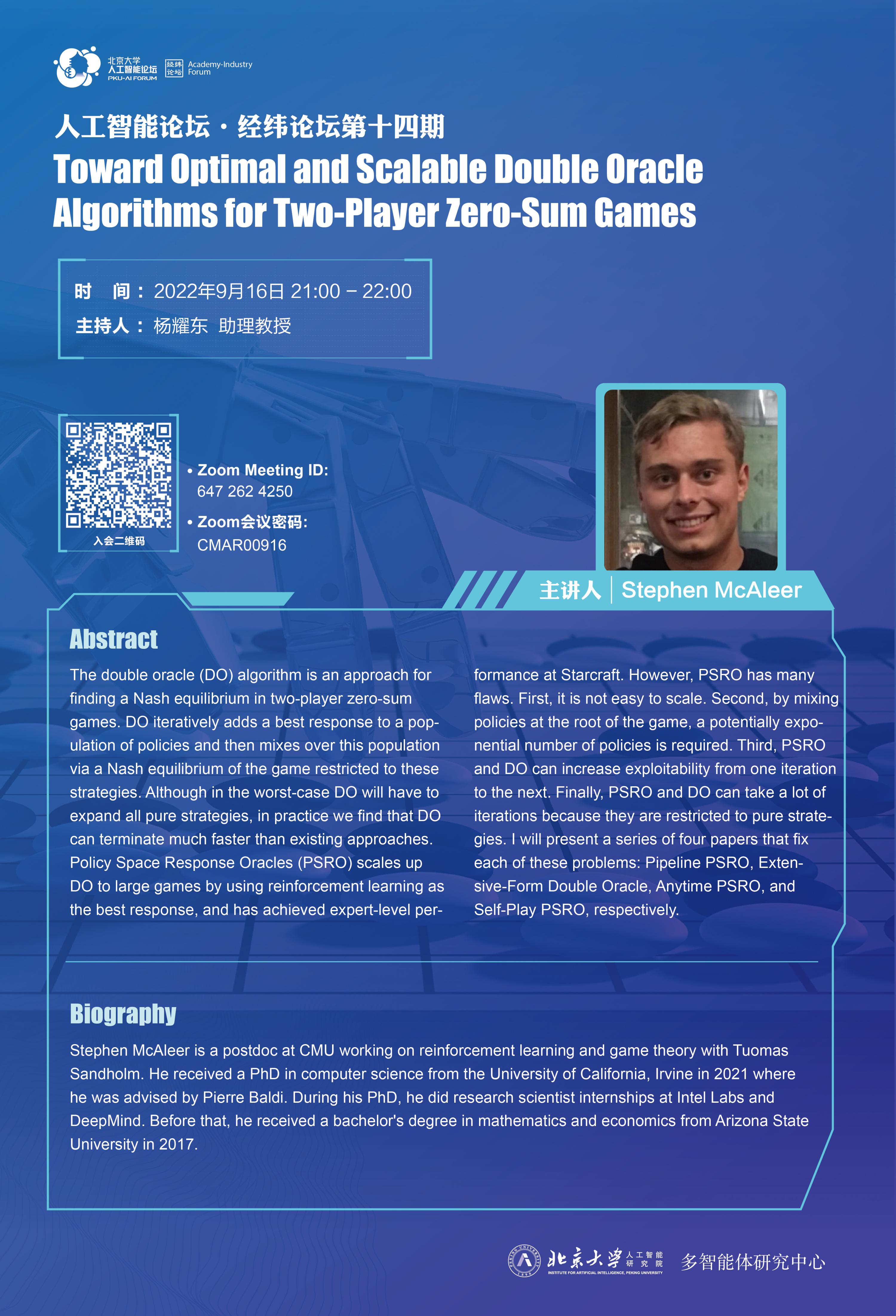

Speaker: Stephen McAleer

Time: 2022/09/16, 21:00

Host: Yaodong Yang (杨耀东), Assistant Professor

Abstract

The double oracle (DO) algorithm is an approach for finding a Nash equilibrium in two-player zero-sum games. DO iteratively adds a best response to a population of policies and then mixes over this population via a Nash equilibrium of the game restricted to these strategies. Although in the worst case DO must expand all pure strategies, in practice we find that DO can terminate much faster than existing approaches. Policy Space Response Oracles (PSRO) scales DO up to large games by using reinforcement learning to compute best responses, and has achieved expert-level performance in StarCraft. However, PSRO has several flaws. First, it is not easy to scale. Second, because it mixes policies at the root of the game, it can require an exponential number of policies. Third, PSRO and DO can increase exploitability from one iteration to the next. Finally, PSRO and DO can take many iterations because they only add pure strategies to the population. I will present a series of four papers that fix each of these problems: Pipeline PSRO, Extensive-Form Double Oracle, Anytime PSRO, and Self-Play PSRO, respectively.
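The DO loop described above can be sketched on a small normal-form (matrix) game. This is a minimal illustration under stated assumptions, not the speaker's implementation. Best responses are computed exactly by enumerating pure strategies. PSRO would replace this step with reinforcement learning. The restricted game is solved approximately with regret matching rather than an exact linear program. All function names (`regret_matching_nash`, `exploitability`, `double_oracle`) are hypothetical.

```python
from typing import List, Tuple

Matrix = List[List[float]]  # payoff[i][j]: row player's payoff; column player gets the negative


def regret_matching_nash(payoff: Matrix, iters: int = 20000) -> Tuple[List[float], List[float]]:
    """Approximate a Nash equilibrium of a zero-sum matrix game via regret matching.

    Assumption: an approximate meta-solver is acceptable; the time-averaged
    strategies converge to a Nash equilibrium as iters grows.
    """
    n_rows, n_cols = len(payoff), len(payoff[0])
    row_regret, col_regret = [0.0] * n_rows, [0.0] * n_cols
    row_avg, col_avg = [0.0] * n_rows, [0.0] * n_cols

    def strategy(regret: List[float]) -> List[float]:
        # Play in proportion to positive cumulative regret; uniform if none is positive.
        pos = [max(r, 0.0) for r in regret]
        total = sum(pos)
        return [p / total for p in pos] if total > 0 else [1.0 / len(pos)] * len(pos)

    for _ in range(iters):
        x, y = strategy(row_regret), strategy(col_regret)
        # Expected payoff of each pure action against the opponent's current mixture.
        row_util = [sum(payoff[i][j] * y[j] for j in range(n_cols)) for i in range(n_rows)]
        col_util = [-sum(payoff[i][j] * x[i] for i in range(n_rows)) for j in range(n_cols)]
        row_val = sum(p * u for p, u in zip(x, row_util))
        col_val = sum(p * u for p, u in zip(y, col_util))
        for i in range(n_rows):
            row_regret[i] += row_util[i] - row_val
            row_avg[i] += x[i] / iters
        for j in range(n_cols):
            col_regret[j] += col_util[j] - col_val
            col_avg[j] += y[j] / iters
    return row_avg, col_avg


def exploitability(payoff: Matrix, x: List[float], y: List[float]) -> float:
    """NashConv: total gain both players could obtain by switching to a best response."""
    best_row = max(sum(row[j] * y[j] for j in range(len(y))) for row in payoff)
    worst_col = min(sum(payoff[i][j] * x[i] for i in range(len(x))) for j in range(len(y)))
    return best_row - worst_col


def double_oracle(payoff: Matrix):
    n_rows, n_cols = len(payoff), len(payoff[0])
    rows, cols = [0], [0]  # arbitrary initial populations: one pure strategy per player
    history = []  # exploitability of the lifted restricted-game equilibrium per iteration
    while True:  # terminates: populations only grow and the game is finite
        restricted = [[payoff[i][j] for j in cols] for i in rows]
        x_sub, y_sub = regret_matching_nash(restricted)
        # Lift the restricted-game mixtures back to the full strategy spaces.
        x, y = [0.0] * n_rows, [0.0] * n_cols
        for k, i in enumerate(rows):
            x[i] = x_sub[k]
        for k, j in enumerate(cols):
            y[j] = y_sub[k]
        history.append(exploitability(payoff, x, y))
        # Oracles: exact pure-strategy best responses in the full game.
        row_br = max(range(n_rows), key=lambda i: sum(payoff[i][j] * y[j] for j in range(n_cols)))
        col_br = min(range(n_cols), key=lambda j: sum(payoff[i][j] * x[i] for i in range(n_rows)))
        if row_br in rows and col_br in cols:
            return x, y, rows, cols, history  # no new best response: stop
        if row_br not in rows:
            rows.append(row_br)
        if col_br not in cols:
            cols.append(col_br)


if __name__ == "__main__":
    # Rock-paper-scissors: index 0 = rock, 1 = paper, 2 = scissors.
    rps = [[0.0, -1.0, 1.0], [1.0, 0.0, -1.0], [-1.0, 1.0, 0.0]]
    x, y, rows, cols, history = double_oracle(rps)
    print(rows, cols, [round(p, 3) for p in x], [round(e, 3) for e in history])
```

On rock-paper-scissors, the unique equilibrium has full support. As a result, this run ends up expanding every pure strategy, which matches the worst case mentioned above. The populations grow to all three actions and the final mixtures are close to uniform. The termination check is sound because, once neither best response is new, the full-game best responses coincide with the restricted ones. The full-game exploitability then equals the restricted-game exploitability.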

Biography

Stephen McAleer is a postdoc at CMU working on reinforcement learning and game theory with Tuomas Sandholm. He received a PhD in computer science from the University of California, Irvine, in 2021, where he was advised by Pierre Baldi. During his PhD, he did research scientist internships at Intel Labs and DeepMind. Before that, he received a bachelor's degree in mathematics and economics from Arizona State University in 2017.